The Bias Beneath the Code

Artificial intelligence systems promise efficiency and objectivity, but increasingly reveal a troubling truth: they often perpetuate and amplify the very human biases they’re meant to overcome. While much attention has focused on AI’s racial and gender discrimination problems, a more insidious form of bias is emerging—discrimination against people with disabilities and visible differences.

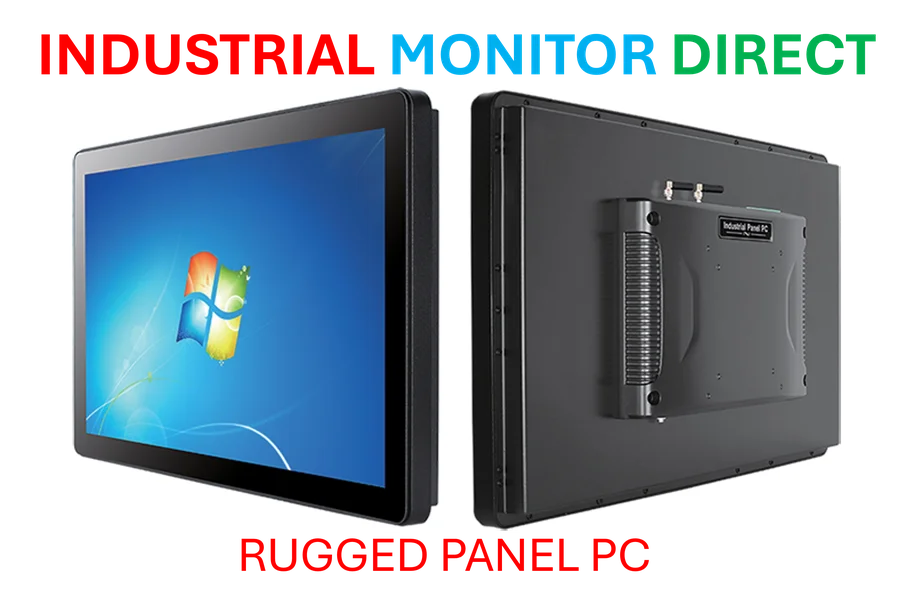

As organizations rush to implement AI solutions, many fail to consider how these systems might exclude entire segments of the population. The consequences are more than theoretical—they’re profoundly human, as Connecticut resident Autumn Gardiner discovered during what should have been a routine DMV visit.

A Dehumanizing Experience

Gardiner’s story, first reported by Wired, illustrates the very real harm that poorly designed AI systems can inflict. Visiting the DMV to update her license after getting married, she encountered an ID verification system that repeatedly rejected her photos because of her Freeman-Sheldon syndrome—a rare genetic disorder affecting facial muscles.

“It was humiliating and weird,” Gardiner recounted. “Here’s this machine telling me that I don’t have a human face.” The public nature of the repeated rejections turned a simple administrative task into a spectacle, highlighting how AI facial recognition systems fail people with visible differences in fundamental ways.

The Spectrum of Visible Differences

Freeman-Sheldon syndrome falls under what advocacy groups call “visible differences”—a broad category including scars, birthmarks, burns, craniofacial conditions, vitiligo, alopecia, and various genetic conditions. According to Changing Faces, approximately one in five people lives with some form of visible difference.

Gardiner is far from alone in her experience with exclusionary AI. Wired interviewed numerous individuals with visible differences who described similar frustrations across multiple platforms—from social media filters that distort their features to banking apps that cannot verify their identity.

The Growing Crisis of Digital Exclusion

As facial recognition technology becomes embedded in everyday life—from unlocking phones to accessing government services to verifying financial transactions—the exclusion of people with visible differences represents a significant civil rights issue. Nikki Lilly of Face Equality International emphasized this point in recent UN testimony, stating, “In many countries, facial recognition is increasingly a part of everyday life, but this technology is failing our community.”

The problem extends beyond convenience. When essential services become inaccessible because of algorithmic bias, the result is what disability advocates call “digital redlining”: systematic exclusion from the digital world. This is a critical challenge for any organization whose digital transformation claims to prioritize inclusion and accessibility.

Technical Roots of Discrimination

The discrimination stems from how AI systems are trained. Most facial recognition algorithms learn from datasets containing millions of images, but these datasets notoriously underrepresent people with disabilities and visible differences. When the training data lacks diversity, the resulting models perform poorly on populations excluded from that data.
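
One way to make this performance gap visible is to report error rates per group instead of a single overall figure. The sketch below is a minimal illustration, not any vendor's evaluation method: the group labels, the record format, and the `false_rejection_rates` helper are all assumptions, and the sample records are made up for demonstration.

```python
from collections import defaultdict


def false_rejection_rates(attempts):
    """Return {group: share of genuine attempts that were rejected}.

    `attempts` is an iterable of (group, accepted) pairs, where every
    attempt comes from a genuine user, so each rejection is an error.
    """
    totals = defaultdict(int)
    rejected = defaultdict(int)
    for group, accepted in attempts:
        totals[group] += 1
        if not accepted:
            rejected[group] += 1
    return {group: rejected[group] / totals[group] for group in totals}


# Hypothetical, made-up records used only to show the calculation.
attempts = [
    ("no_visible_difference", True),
    ("no_visible_difference", True),
    ("no_visible_difference", True),
    ("no_visible_difference", False),
    ("visible_difference", True),
    ("visible_difference", False),
    ("visible_difference", False),
    ("visible_difference", False),
]

rates = false_rejection_rates(attempts)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%} of genuine attempts rejected")
```

An aggregate rate over all eight records would be 50 percent and would hide the fact that one group is rejected three times as often as the other, which is why a disaggregated report is the more useful audit artifact.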

This technical failure has human consequences. As AI development accelerates, the gap between technological capability and human need widens. The very systems meant to streamline identification often become instruments of exclusion, particularly for those who already face significant societal barriers.

Broader Implications for AI Governance

The failure to accommodate people with visible differences reflects larger issues in AI development and deployment. Many organizations implementing these systems lack the expertise to properly evaluate their limitations or understand their impact on diverse populations.

Regulatory disputes over AI highlight the growing tension between rapid deployment and necessary safeguards. As governments worldwide grapple with AI governance, incidents like Gardiner’s DMV experience underscore the urgent need for comprehensive testing across diverse user groups before public deployment.

Toward More Inclusive Technology

Solving this problem requires multidimensional approaches:

- Diverse training data: Including comprehensive representation of people with visible differences in AI training datasets

- Rigorous testing: Conducting accessibility audits across disability communities before deployment

- Alternative pathways: Ensuring human alternatives when automated systems fail

- Regulatory frameworks: Developing standards that mandate accessibility in AI systems
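
The "alternative pathways" item in the list above can be sketched in code. This is a minimal illustration under stated assumptions: `verify_with_fallback`, `VerificationResult`, and the retry limit of two are hypothetical names and values, not part of any real ID verification product. The idea is that a person is never left retrying indefinitely; after a small number of automated failures the case goes to a human reviewer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VerificationResult:
    verified: bool   # True only if the automated check passed
    route: str       # "automated" or "human_review"
    attempts: int    # number of automated attempts made


def verify_with_fallback(
    check: Callable[[], bool], max_attempts: int = 2
) -> VerificationResult:
    """Run an automated check a limited number of times, then escalate.

    `check` stands in for one automated verification attempt
    (hypothetical). A failed result is not a final denial: the route
    "human_review" signals that a staff member must decide.
    """
    for attempt in range(1, max_attempts + 1):
        if check():
            return VerificationResult(True, "automated", attempt)
    return VerificationResult(False, "human_review", max_attempts)


# Usage: a check that always fails is escalated after two attempts.
escalated = verify_with_fallback(lambda: False)
print(escalated.route)

# Usage: a check that passes immediately stays on the automated route.
passed = verify_with_fallback(lambda: True)
print(passed.route)
```

Keeping the retry limit low is a deliberate choice in this sketch, since repeated public rejections are themselves part of the harm described earlier.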

The challenge extends beyond facial recognition to automation more broadly. As organizations pursue digital transformation, they must balance efficiency with equity, recognizing that the most vulnerable users often bear the cost of technological failure.

The Path Forward

Gardiner’s experience—and those of countless others—highlights an urgent need for course correction. The solution isn’t abandoning AI, but building better, more inclusive systems. This requires collaboration between developers, disability advocates, regulators, and affected communities.

As AI and automation continue to advance, we must ensure that technological progress doesn’t come at the cost of human dignity. The companies and governments that succeed will be those that recognize inclusion as a fundamental requirement, not an afterthought.

The stakes extend beyond individual convenience to fundamental rights and participation in modern society. In an increasingly automated world, ensuring that technology serves all people—regardless of appearance or ability—represents one of our most pressing technological and ethical challenges. The time to address these issues is now, before exclusion becomes embedded in the very architecture of our digital infrastructure.

This article aggregates information from publicly available sources. All trademarks and copyrights belong to their respective owners.
